Introduction

Apache Spark, once a component of the Hadoop ecosystem, is now becoming the big-data platform of choice for enterprises. It is a powerful open source engine that supports real-time stream processing, interactive processing, graph processing, in-memory processing, and batch processing, combining high speed with ease of use and a standard interface.

Apache Spark is a platform for cluster computing. Spark lets you spread data and computations over clusters with multiple nodes (think of each node as a separate computer). Splitting up your data makes it easier to work with very large datasets because each node only works with a small amount of data.

- Spark: a general-purpose computational framework that substantially improves on the performance of MapReduce while retaining the basic model:

- Memory based data processing framework → avoids costly I/O by keeping intermediate results in memory

- Leverages distributed memory

- Remembers operations applied to dataset

- Data locality based computation → High Performance

- Best for both iterative (or stream processing) and batch workloads

Spark Questions

Q. What is Apache Spark?

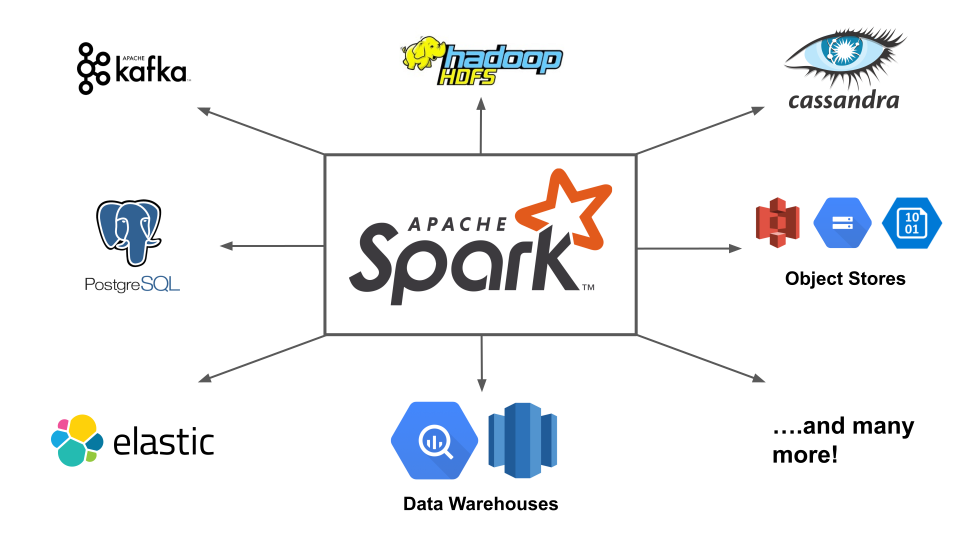

Spark is a fast, easy-to-use and flexible data processing framework. It has an advanced execution engine supporting cyclic data flow and in-memory computing. Spark can run on Hadoop, standalone or in the cloud and is capable of accessing diverse data sources including HDFS, HBase, Cassandra and others.

Q. Explain key features of Spark?

Allows Integration with Hadoop and files included in HDFS.

Spark provides an interactive language shell, built on its own interpreter for Scala (the language in which Spark is written).

Spark is built around RDDs (Resilient Distributed Datasets), which can be cached across the computing nodes in a cluster.

Spark supports multiple analytic tools that are used for interactive query analysis, real-time analysis, and graph processing.

Q. What is RDD?

Ans. An RDD (Resilient Distributed Dataset) is a fault-tolerant collection of elements that can be operated on in parallel. The data in an RDD is partitioned, immutable, and distributed across the cluster.

Q. Name the different types of RDD?

Ans. There are primarily two types of RDD – parallelized collection and Hadoop datasets.

Q. What are the methods of creating RDDs in Spark?

Ans. There are two methods –

By parallelizing a collection in your driver program.

By loading an external dataset from external storage such as HDFS, HBase, or a shared file system.

Q. What is a Sparse Vector?

Ans. A sparse vector is stored as two parallel arrays, one for indices and the other for values, so only the non-zero entries take up space.
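
The two-array layout can be sketched in plain Python. This is only an illustration of the idea, not Spark's own vector class; the name `SimpleSparseVector` and its methods are hypothetical.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class SimpleSparseVector:
    """Hypothetical stand-in: only the non-zero entries are stored."""
    size: int             # logical length of the vector
    indices: List[int]    # positions of the non-zero entries
    values: List[float]   # values[i] is the entry at position indices[i]

    def to_dense(self) -> List[float]:
        # Expand back into a full-length list, filling the gaps with zeros.
        dense = [0.0] * self.size
        for i, v in zip(self.indices, self.values):
            dense[i] = v
        return dense

    def dot(self, other: List[float]) -> float:
        # Only the stored entries can contribute to the dot product.
        return sum(v * other[i] for i, v in zip(self.indices, self.values))


vec = SimpleSparseVector(size=6, indices=[1, 4], values=[3.0, 5.5])
dense = vec.to_dense()
```

Here a vector of length 6 needs only two stored entries, and operations such as the dot product touch just those entries.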

Q. What are the languages supported by Apache Spark, and which is the most popular one? What is JDBC and why is it popular?

Ans. There are four languages supported by Apache Spark: Scala, Java, Python, and R. Scala, the language Spark itself is written in, has traditionally been regarded as the most popular one.

Q. What is YARN?

Ans. YARN is Hadoop's resource management layer, and one of the cluster managers Spark can run on. It provides a central resource management platform to deliver scalable operations across the cluster.

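Q. Do you need to install Spark on all nodes of a YARN cluster? Why?

Ans. No. Spark runs on top of YARN, so YARN takes care of distributing and running the application on the cluster nodes; Spark only needs to be available on the machine that submits the job.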

Q. Is it possible to run Apache Spark on Apache Mesos?

Ans. Yes.

Q. What is lineage graph?

Ans. Each RDD in Spark depends on one or more other RDDs. The representation of the dependencies between RDDs is known as the lineage graph.
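
A minimal plain-Python sketch of the idea follows. It is not Spark code: the `Node` class and the operation names in the strings are hypothetical, chosen only to show how each dataset can remember the parents it was derived from.

```python
from typing import List, Optional


class Node:
    """Hypothetical dataset node that remembers which parents it came from."""

    def __init__(self, name: str, parents: Optional[List["Node"]] = None):
        self.name = name
        self.parents = parents or []

    def lineage(self, depth: int = 0) -> List[str]:
        # Walk the dependency graph from this node back to its sources.
        lines = ["  " * depth + self.name]
        for parent in self.parents:
            lines.extend(parent.lineage(depth + 1))
        return lines


source = Node("textFile(logs)")
errors = Node("filter(is_error)", [source])
codes = Node("map(extract_code)", [errors])
users = Node("parallelize(users)")
joined = Node("join", [codes, users])

lineage_lines = joined.lineage()
print("\n".join(lineage_lines))
```

Because every node knows how it was derived, a lost piece of data can in principle be rebuilt by replaying the recorded steps from the sources.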

Q. Define Partitions in Apache Spark?

Ans. A partition is a smaller, logical division of data, similar to a ‘split’ in MapReduce. It is a logical chunk of a large distributed dataset. Partitioning is the process of deriving such logical units of data so that they can be processed in parallel, which speeds up processing.
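
As a rough illustration in plain Python (not the Spark API), the sketch below splits a small dataset into contiguous partitions, computes a partial result per partition, and combines the partial results. The helper name `split_into_partitions` is hypothetical.

```python
from typing import List, Sequence


def split_into_partitions(data: Sequence[int], num_partitions: int) -> List[List[int]]:
    """Split data into num_partitions contiguous chunks of near-equal size."""
    base, extra = divmod(len(data), num_partitions)
    partitions, start = [], 0
    for i in range(num_partitions):
        # The first `extra` partitions receive one additional element.
        end = start + base + (1 if i < extra else 0)
        partitions.append(list(data[start:end]))
        start = end
    return partitions


data = list(range(10))
partitions = split_into_partitions(data, 3)

# Each partition is processed independently, then the partial results are combined.
partial_sums = [sum(p) for p in partitions]
total = sum(partial_sums)
```

In a real cluster the per-partition work would run on different nodes; here it runs in a single process purely to show the data flow.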

Q. What is a Catalyst framework?

Ans. Catalyst is the query optimization framework in Spark SQL. It lets Spark automatically transform SQL queries by applying optimization rules, and new rules can be added to it, to produce faster query execution.
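
To give a feel for what "transforming a query with an optimization rule" means, here is a toy sketch in plain Python of one classic rule, constant folding, applied to a tiny expression tree. Catalyst itself is written in Scala and is far richer; the `Lit`, `Col`, `Add` classes and `fold_constants` function are hypothetical names invented for this example.

```python
from dataclasses import dataclass
from typing import Union


@dataclass(frozen=True)
class Lit:
    value: int          # a literal constant


@dataclass(frozen=True)
class Col:
    name: str           # a reference to a column


@dataclass(frozen=True)
class Add:
    left: "Expr"
    right: "Expr"


Expr = Union[Lit, Col, Add]


def fold_constants(expr: Expr) -> Expr:
    """Rewrite rule: replace Add(Lit, Lit) with a single Lit, bottom-up."""
    if isinstance(expr, Add):
        left = fold_constants(expr.left)
        right = fold_constants(expr.right)
        if isinstance(left, Lit) and isinstance(right, Lit):
            return Lit(left.value + right.value)
        return Add(left, right)
    return expr


# Expression tree for: price + (1 + 2)
plan = Add(Col("price"), Add(Lit(1), Lit(2)))
optimized = fold_constants(plan)
```

The rewritten tree computes the same result, but the constant part is evaluated once up front instead of once per row.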

Q. What are Actions in Spark?

Ans. An action brings data back from an RDD to the local machine. Executing an action triggers all of the transformations declared before it and returns their result.

Q. What is a Parquet file?

Ans. Parquet is a columnar file format supported by many data processing systems, including Spark SQL.
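
The difference between a row layout and a columnar layout can be sketched in plain Python. This only illustrates the in-memory idea, not the Parquet file format itself, and the sample records are made up for the example.

```python
# Row-oriented: each record keeps all of its fields together.
rows = [
    {"id": 1, "city": "Oslo", "temp": 3.0},
    {"id": 2, "city": "Cairo", "temp": 21.0},
    {"id": 3, "city": "Lima", "temp": 12.0},
]

# Column-oriented: all values of one field are stored together.
columns = {key: [row[key] for row in rows] for key in rows[0]}

# A query that needs a single field only has to touch that one column.
avg_temp = sum(columns["temp"]) / len(columns["temp"])
```

Analytical queries often read a few columns out of many, which is why a columnar layout tends to suit them.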

Q. What is GraphX?

Ans. GraphX is Spark's component for graph processing; it is used to build and transform interactive graphs.

Q. What file systems does Spark support?

Ans. Hadoop distributed file system (HDFS), local file system, and Amazon S3.

Q. What does a Spark Engine do?

Spark Engine is responsible for scheduling, distributing and monitoring the data application across the cluster.

Q. What do you understand by Transformations in Spark?

Transformations are functions applied to an RDD that result in another RDD. They do not execute until an action occurs. map() and filter() are examples of transformations: map() applies the function passed to it to each element of the RDD and produces another RDD, while filter() creates a new RDD by selecting the elements of the current RDD for which the function passed to it returns true.
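
The "nothing runs until an action occurs" behaviour can be imitated in plain Python. The `LazyDataset` class below is a hypothetical toy, not the RDD API: its `map` and `filter` only record what should happen, and `collect` is the one call that actually does the work.

```python
from typing import Callable, Iterable, List


class LazyDataset:
    """Hypothetical toy dataset: transformations are recorded, not executed."""

    def __init__(self, source: Iterable, steps: tuple = ()):
        self._source = source
        self._steps = steps  # pending transformations, in order

    def map(self, fn: Callable) -> "LazyDataset":
        return LazyDataset(self._source, self._steps + (("map", fn),))

    def filter(self, pred: Callable) -> "LazyDataset":
        return LazyDataset(self._source, self._steps + (("filter", pred),))

    def collect(self) -> List:
        # Action: only now are the recorded transformations applied.
        items = iter(self._source)
        for kind, fn in self._steps:
            items = map(fn, items) if kind == "map" else filter(fn, items)
        return list(items)


calls = []


def square(x):
    calls.append(x)  # record when the function actually runs
    return x * x


numbers = LazyDataset([1, 2, 3, 4, 5])
evens_squared = numbers.map(square).filter(lambda x: x % 2 == 0)
calls_before_action = len(calls)  # nothing has executed yet
result = evens_squared.collect()
```

Each transformation returns a new dataset object and leaves the original untouched, mirroring the way a transformation on an RDD yields another RDD.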

Q. Define Actions?

An action brings data back from an RDD to the local machine. Executing an action triggers all of the transformations declared before it. reduce() is an action that applies the function passed to it again and again until only one value is left. take(n) is an action that returns the first n elements of the RDD to the local node, while collect() returns all of them.
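
As a rough analogy, the same three operations can be written with the Python standard library. This is not Spark code; it only mirrors the semantics on a local list: reducing to one value, taking the first n elements, and collecting everything.

```python
from functools import reduce
from itertools import islice

data = [4, 8, 15, 16, 23, 42]

# reduce: combine elements pairwise with the function until one value is left.
total = reduce(lambda a, b: a + b, data)

# take(n) analogue: return only the first n elements.
first_three = list(islice(data, 3))

# collect analogue: return every element.
everything = list(data)
```

On a large distributed dataset, returning only the first few elements is much cheaper than bringing every element back to one machine.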

Q. Define functions of SparkCore?

Serving as the base engine, SparkCore performs various important functions like memory management, monitoring jobs, fault-tolerance, job scheduling and interaction with storage systems.

Q. What is Spark Driver?

The Spark driver is the program that runs on the master node and declares transformations and actions on RDDs. In simple terms, the driver creates the SparkContext, which connects to a given Spark master. The driver also delivers the RDD graphs to the master, where the standalone cluster manager runs.

Q. Name the commonly used components of the Spark ecosystem?

Spark SQL (the successor to Shark) for working with structured data.

Spark Streaming for processing live data streams.

GraphX for generating and computing graphs.

MLlib (Machine Learning Algorithms).

SparkR to promote R Programming in Spark engine.

Q. Define Spark Streaming?

Spark Streaming is an extension to the Spark API that allows stream processing of live data streams. Data from different sources such as Flume and HDFS is streamed in, processed, and finally pushed out to file systems, live dashboards, and databases. It is similar to batch processing in that the input stream is divided into small batches, which are then processed one after another.
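
The batching idea can be sketched in plain Python. Note the assumption: Spark Streaming cuts batches by time interval, whereas this toy cuts them by item count so that the example is deterministic. The function name `micro_batches` is hypothetical.

```python
from typing import Iterable, Iterator, List


def micro_batches(stream: Iterable[str], batch_size: int) -> Iterator[List[str]]:
    """Group an incoming stream into consecutive batches of up to batch_size items."""
    batch: List[str] = []
    for event in stream:
        batch.append(event)
        if len(batch) == batch_size:
            yield batch
            batch = []
    if batch:  # emit the final, possibly smaller, batch
        yield batch


events = ["a", "b", "a", "c", "b", "a", "d"]
batches = list(micro_batches(events, batch_size=3))

# Each batch is then handled like a small batch job, e.g. counting distinct events.
distinct_per_batch = [len(set(b)) for b in batches]
```

Treating a stream as a sequence of small batches is what allows the same processing logic to serve both batch and streaming workloads.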

Q. What is Spark SQL?

Spark SQL, the successor to the earlier Shark project, is a Spark module for working with structured data. Through this module, Spark executes relational SQL queries on the data. The core of the component was originally a special kind of RDD called SchemaRDD (renamed DataFrame in Spark 1.3), composed of row objects together with a schema that defines the data type of each column in the row. It is similar to a table in a relational database.

Q. List the functions of Spark SQL?

Spark SQL is capable of:

Loading data from a variety of structured sources.

Querying data using SQL statements, both inside a Spark program and from external tools that connect to Spark SQL through standard database connectors (JDBC/ODBC), for instance business intelligence tools like Tableau.

Providing rich integration between SQL and regular Python/Java/Scala code, including the ability to join RDDs and SQL tables, expose custom functions in SQL, and more.

Q. What are the benefits of Spark over MapReduce?

Thanks to in-memory processing, Spark can run workloads roughly 10 to 100 times faster than Hadoop MapReduce, which relies on persistent storage for every data processing task.

Unlike Hadoop, Spark provides built-in libraries that perform multiple kinds of tasks from the same core, such as batch processing, streaming, machine learning, and interactive SQL queries. Hadoop MapReduce, by contrast, only supports batch processing.

Hadoop is highly disk-dependent whereas Spark promotes caching and in-memory data storage.

Spark is capable of performing computations multiple times on the same dataset, which is called iterative computation; Hadoop MapReduce has no built-in support for iterative computing.
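
Why keeping data in memory helps iterative work can be shown with a small plain-Python sketch. It is an analogy only: `load` stands in for an expensive read from storage, and the function name `run_iterations` and the toy update rule are hypothetical.

```python
from typing import Tuple


def run_iterations(iterations: int, cache: bool) -> Tuple[int, float]:
    """Return (number of dataset loads, final estimate) for an iterative job."""
    loads = 0

    def load():
        # Stand-in for an expensive read from disk.
        nonlocal loads
        loads += 1
        return [1.0, 2.0, 3.0, 4.0]

    cached = load() if cache else None
    estimate = 0.0
    for _ in range(iterations):
        data = cached if cache else load()
        # Toy iterative step: move the estimate halfway toward the mean.
        estimate += 0.5 * (sum(data) / len(data) - estimate)
    return loads, estimate


loads_without_cache, estimate_a = run_iterations(5, cache=False)
loads_with_cache, estimate_b = run_iterations(5, cache=True)
```

Both runs reach the same estimate, but the uncached run reads the dataset once per iteration while the cached run reads it only once.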

Q. What is Spark Executor?

When the SparkContext connects to a cluster manager, it acquires executors on nodes in the cluster. Executors are Spark processes that run computations and store data on the worker nodes. The SparkContext then sends the final tasks to the executors for execution.
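
The division of labour can be loosely imitated with a thread pool from the Python standard library. This is only an analogy: real executors are separate processes on worker nodes, whereas here the pool's threads play that role inside one process, and the function name `run_task` is hypothetical.

```python
from concurrent.futures import ThreadPoolExecutor


def run_task(partition):
    """A task: the computation applied to one partition of the data."""
    return sum(x * x for x in partition)


partitions = [[1, 2], [3, 4], [5, 6]]

# This script plays the role of the driver; the pool's workers play the executors.
with ThreadPoolExecutor(max_workers=2) as pool:
    partial_results = list(pool.map(run_task, partitions))

# The driver combines the partial results returned by the workers.
total = sum(partial_results)
```

With two workers and three partitions, one worker ends up handling more than one task, much as an executor typically runs many tasks over the life of an application.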

Q. Name types of Cluster Managers in Spark?

The Spark framework supports three major types of Cluster Managers:

Standalone: a basic manager to set up a cluster.

Apache Mesos: a general-purpose, commonly used cluster manager that can also run Hadoop MapReduce and other applications.

YARN: responsible for resource management in Hadoop.

Q. What do you understand by worker node?

Worker node refers to any node that can run the application code in a cluster.